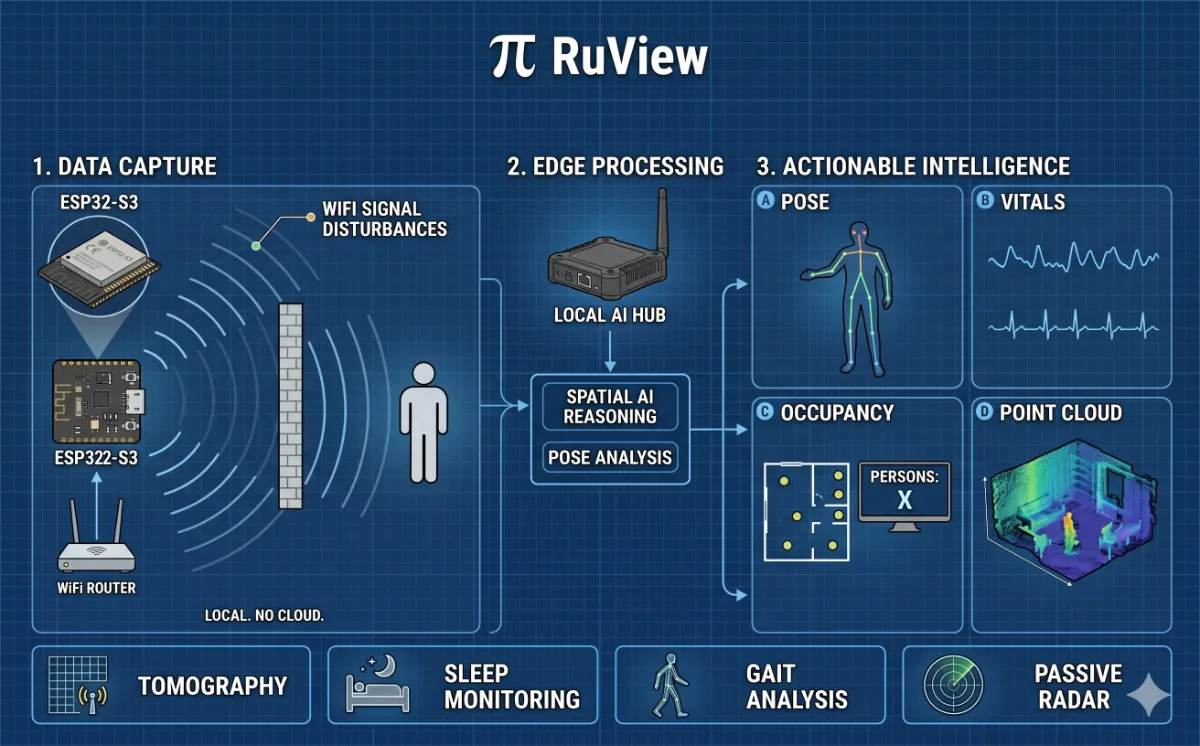

Right now, the WiFi router in your room is flooding the air with radio waves. Those waves bounce off your walls, your furniture, and your body. Every breath you take, every step you make, distorts the signal in a way that is measurable, repeatable, and deeply informative. RuView, an open-source project with more than 48,000 GitHub stars, turns that distortion into something extraordinary: real-time human pose estimation, breathing detection, heart rate monitoring, and presence sensing, all without a single pixel of video.

This is not science fiction. It is not even particularly new science. But RuView is the first production-grade, fully open-source implementation that makes this technology genuinely accessible, running on hardware that costs about as much as a cup of coffee, with zero cloud dependency, zero ongoing fees, and a one-command Docker setup that gets you to live sensing in thirty seconds.

From a Carnegie Mellon Research Paper to a $9 Microcontroller

The underlying science that powers RuView has its roots in academic research, most notably Carnegie Mellon University’s work on DensePose From WiFi, which showed that Channel State Information (CSI), the per-subcarrier amplitude and phase data that WiFi hardware captures, carries enough information about a room’s occupants to reconstruct human body pose. The key insight is elegant: WiFi routers already saturate indoor spaces with radio energy. Human bodies are mostly water, and water absorbs and scatters radio waves in highly characteristic ways, so a person’s position and movement leave a measurable imprint on the CSI that a receiver records.

RuView takes that academic concept and turns it into something a developer can actually run. Built on top of a Rust-based signal processing engine called RuVector, the project delivers a complete edge AI perception system that learns from its environment, adapts to new rooms without retraining, and processes 54,000 frames per second on commodity hardware. The Rust rewrite alone represents an 810x speedup over the original Python implementation.
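As a quick sanity check on those two figures, the arithmetic below derives the per-frame time budget and the throughput the Python baseline would need to have had. Only the 54,000 frames per second and 810x numbers come from the project's claims; the variable names are illustrative.

```python
# Figures quoted above; everything else is derived arithmetic.
RUST_FPS = 54_000   # claimed Rust pipeline throughput, frames per second
SPEEDUP = 810       # claimed speedup over the Python implementation

per_frame_us = 1_000_000 / RUST_FPS       # time budget per frame, microseconds
implied_python_fps = RUST_FPS / SPEEDUP   # baseline throughput implied by the speedup

print(f"per-frame budget: {per_frame_us:.1f} us")
print(f"implied Python baseline: {implied_python_fps:.1f} frames/s")
```

A budget of roughly 18.5 microseconds per frame sits comfortably inside the under-100-microsecond figure quoted for the pipeline, and the implied Python baseline works out to about 67 frames per second.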

“Perceive the world through signals. No cameras. No wearables. No Internet. Just physics.”

How It Works: From Radio Waves to Body Keypoints in Microseconds

The signal processing pipeline is where RuView does its most impressive work. A mesh of ESP32-S3 sensor nodes, typically four to six units costing about $8 each, captures CSI data across three WiFi channels simultaneously. This gives the system 168 virtual subcarriers per link to work with. Here is what happens to that data in real time:

Step 1: Raw CSI capture. The ESP32-S3 mesh streams CSI frames at 20 Hz across channels 1, 6, and 11 via a time-division multiplexing protocol.

Step 2: Multi-band fusion. Three channels times 56 subcarriers yields 168 virtual subcarriers per link, blended via attention weighting to extract maximum spatial information.

Step 3: Signal cleaning. Hampel outlier rejection, SpotFi phase correction, Fresnel zone modeling, and band-pass vital decomposition strip away environmental noise and multipath artifacts.

Step 4: AI backbone. RuVector’s attention networks, graph algorithms, and sparse solvers replace all hand-tuned thresholds with data-driven inference that adapts automatically to each environment.

Step 5: Neural inference. Processed signals map to 17 COCO body keypoints, breathing rate between 6 and 30 breaths per minute, and heart rate between 40 and 120 beats per minute.

Step 6: Real-time output. Pose skeleton, vital signs, room fingerprint, and drift alerts, all produced in under 100 microseconds per frame.
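To make the signal cleaning in Step 3 more concrete, here is a minimal sketch of Hampel outlier rejection applied to one subcarrier's amplitude series. It is a textbook version of the filter, not RuView's code: the function name, the window size, and the three-sigma threshold are all assumptions chosen for illustration.

```python
from statistics import median

def hampel_filter(samples, half_window=3, n_sigmas=3.0):
    """Replace outliers with the local median (Hampel identifier).

    half_window and n_sigmas are illustrative defaults, not RuView's settings.
    """
    k = 1.4826  # scales the MAD to a standard-deviation estimate for Gaussian data
    cleaned = list(samples)
    outlier_indices = []
    for i in range(len(samples)):
        lo = max(0, i - half_window)
        hi = min(len(samples), i + half_window + 1)
        window = samples[lo:hi]
        med = median(window)
        mad = median(abs(x - med) for x in window)  # median absolute deviation
        if abs(samples[i] - med) > n_sigmas * k * mad:
            cleaned[i] = med
            outlier_indices.append(i)
    return cleaned, outlier_indices

# Synthetic amplitude series with a single spike at index 4.
amplitudes = [1.0, 1.1, 0.9, 1.0, 9.0, 1.05, 0.95, 1.0]
cleaned, outliers = hampel_filter(amplitudes)
print(outliers)  # [4]
```

The median and median absolute deviation are used instead of the mean and standard deviation because a single large spike barely moves them, so the spike cannot hide itself by inflating the threshold.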

The coherence gate, a component that accepts, rejects, or flags measurements based on signal quality, keeps the system stable for days at a time without manual tuning. And crucially, no training cameras are required. The self-learning system bootstraps entirely from raw WiFi data, building a model of each room’s unique RF signature over roughly ten minutes of passive observation.
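The article does not say how the coherence gate scores signal quality, so the following is only a sketch of the accept, reject, or flag idea under stated assumptions. It uses the mean resultant length of frame-to-frame phase changes as a stand-in quality score, and the two thresholds are hypothetical values picked for the example.

```python
import cmath
import math
from enum import Enum

class GateDecision(Enum):
    ACCEPT = "accept"
    FLAG = "flag"
    REJECT = "reject"

def phase_coherence(prev_phases, curr_phases):
    """Mean resultant length of per-subcarrier phase changes, in [0, 1].

    1.0 means every subcarrier shifted by the same angle; values near 0 mean
    the shifts are scattered, which suggests a noisy or corrupted frame.
    """
    phasors = [cmath.exp(1j * (c - p)) for p, c in zip(prev_phases, curr_phases)]
    if not phasors:
        raise ValueError("need at least one subcarrier")
    return abs(sum(phasors)) / len(phasors)

def coherence_gate(score, accept_above=0.8, reject_below=0.5):
    """Map a coherence score to a decision. Both thresholds are hypothetical."""
    if score >= accept_above:
        return GateDecision.ACCEPT
    if score < reject_below:
        return GateDecision.REJECT
    return GateDecision.FLAG

prev = [0.0, 0.5, 1.0, 1.5]
steady = [p + 0.1 for p in prev]  # uniform shift across subcarriers
scattered = [p + d for p, d in zip(prev, (0.0, math.pi / 2, math.pi, 3 * math.pi / 2))]

print(coherence_gate(phase_coherence(prev, steady)))     # GateDecision.ACCEPT
print(coherence_gate(phase_coherence(prev, scattered)))  # GateDecision.REJECT
```

Keeping a middle "flag" band rather than a single cutoff lets downstream stages use a borderline frame with reduced weight instead of discarding it outright.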

Perhaps the most striking capability is contactless vital sign monitoring. By applying band-pass filters to the CSI signal and running FFT-based peak detection, RuView extracts breathing and heart rates through walls on a $9 microcontroller, with sub-100-millisecond latency.
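The band-pass and peak-detection idea can be sketched in a few lines. The example below is an illustration rather than RuView's implementation: it evaluates a naive DFT only at frequencies inside the band of interest, which stands in for a band-pass filter followed by an FFT peak search. The function name and the synthetic test signal are assumptions; the 20 Hz frame rate and the 6 to 30 and 40 to 120 per-minute ranges are the ones quoted in this article.

```python
import math

def dominant_rate_bpm(signal, sample_rate_hz, low_bpm, high_bpm):
    """Return the strongest periodic component inside [low_bpm, high_bpm].

    Only DFT bins inside the band are evaluated, so the band limits act as an
    ideal band-pass filter. A real pipeline would use an FFT instead.
    """
    n = len(signal)
    mean = sum(signal) / n
    centered = [x - mean for x in signal]  # remove the DC offset
    k_low = max(1, math.ceil(low_bpm * n / (60 * sample_rate_hz)))
    k_high = min((n - 1) // 2, math.floor(high_bpm * n / (60 * sample_rate_hz)))
    best_k, best_power = None, -1.0
    for k in range(k_low, k_high + 1):
        w = 2 * math.pi * k / n
        re = sum(x * math.cos(w * i) for i, x in enumerate(centered))
        im = sum(x * math.sin(w * i) for i, x in enumerate(centered))
        power = re * re + im * im
        if power > best_power:
            best_k, best_power = k, power
    if best_k is None:
        raise ValueError("window too short to resolve the requested band")
    return best_k * sample_rate_hz * 60 / n

# Synthetic 60 s window at 20 Hz: breathing at 15/min plus a weaker 72/min pulse.
fs = 20
samples = [
    math.sin(2 * math.pi * 0.25 * i / fs) + 0.1 * math.sin(2 * math.pi * 1.2 * i / fs)
    for i in range(60 * fs)
]
breathing = dominant_rate_bpm(samples, fs, 6, 30)
heart = dominant_rate_bpm(samples, fs, 40, 120)
print(breathing, heart)
```

Searching each band separately is what lets the much weaker heartbeat component be found even though the breathing component dominates the overall spectrum. The window length sets the resolution: 60 seconds of data resolves rates to one per minute.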

What RuView Can Actually Do

Through-wall human detection. WiFi penetrates concrete, drywall, furniture, and debris. RuView detects presence and motion up to 5 meters through solid walls using Fresnel zone geometry and multipath modeling.

Contactless vital sign monitoring. Breathing rate and heart rate are detected without any body contact or wearable device. This is directly useful for sleep monitoring, elderly care, and monitoring non-critical hospital patients.

Real-time pose estimation. Seventeen COCO body keypoints are reconstructed from WiFi CSI at 54,000 frames per second in the Rust pipeline. Multi-person tracking has no hard software limit, with physics setting the practical ceiling at around three to five people per access point.

Self-supervised learning. The 55 KB on-device model teaches itself from raw WiFi data with no labeled training sets, no cameras, and no human supervision required.

Privacy by design. No video is captured or stored at any stage of the pipeline. This sidesteps GDPR video consent requirements and HIPAA imaging rules entirely, by construction rather than by policy.

Fully local, zero cloud. The system runs entirely on an ESP32 microcontroller. No internet connection, no API keys, no recurring fees.
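The Fresnel zone geometry mentioned under through-wall detection has a simple closed form, sketched below. This is the standard first-order approximation for the zone radius, not code from RuView; how the project applies it internally is not described here. The function name is an assumption, and the 5 meter link is just an example.

```python
import math

SPEED_OF_LIGHT = 299_792_458.0  # meters per second

def fresnel_radius_m(freq_hz, d1_m, d2_m, zone=1):
    """Radius of the n-th Fresnel zone at a point d1 from one end of the link
    and d2 from the other (valid when both distances far exceed the wavelength)."""
    wavelength = SPEED_OF_LIGHT / freq_hz
    return math.sqrt(zone * wavelength * d1_m * d2_m / (d1_m + d2_m))

# WiFi channel 6 (center frequency 2.437 GHz), midpoint of a 5 m link.
radius = fresnel_radius_m(2.437e9, 2.5, 2.5)
print(f"first Fresnel zone radius at midpoint: {radius:.2f} m")
```

At 2.4 GHz the first zone around a room-scale link is a few tens of centimeters wide, which is on the order of a human torso. That is why a body crossing or moving within the zone perturbs the received signal so noticeably.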

Where WiFi Sensing Changes Everything

The applications for this technology span a remarkable range of industries. Because WiFi infrastructure is already deployed almost everywhere, and because the sensing layer adds nothing more than an $8 ESP32 node to an existing network, the cost calculus is completely different from camera-based systems.

Healthcare: Elderly fall detection, sleep apnea monitoring, and hospital patient breathing rate tracking, all without requiring patients to wear anything or consent to video recording.

Retail: Real-time foot traffic heatmaps, queue length estimation, and dwell time by zone, with no cameras and no GDPR consent overhead.

Smart buildings: True occupancy data for HVAC and lighting systems. Operators report 15 to 30 percent energy savings when conditioning systems respond to real presence rather than scheduled timers.

Search and rescue: The WiFi-Mat disaster module detects survivors through rubble using breathing signatures, classifies injury severity using the START triage protocol, and provides three-dimensional localization through up to 30 centimeters of concrete.

Industrial safety: Forklift proximity alerts, confined space monitoring, and cobot safety zones that work through shelving, pallets, dust, and smoke where cameras fail.

Smart home: Room-level presence for lights, HVAC, and audio, with through-wall coverage from two or three nodes eliminating the dead zones that plague passive infrared sensors.

The privacy advantage here is structural, not just a policy decision. A camera system requires consent, signage, data retention policies, and can in principle be subverted to capture identifiable imagery. A WiFi sensing system captures no images at any stage of the pipeline. There is simply no video data to protect, leak, or misuse.

65 Edge AI Modules, a Rust Pipeline, and a Self-Healing Mesh

RuView ships with 65 edge AI modules: tiny WebAssembly binaries, between 5 and 30 KB each, that run directly on the ESP32 with no internet connection and make decisions in under 10 milliseconds. They cover medical applications such as sleep apnea detection, cardiac arrhythmia monitoring, and seizure detection; security applications such as intrusion detection, perimeter breach, and panic motion classification; and exotic research applications including sleep stage classification, emotion detection via micro-movements, and sign language recognition over WiFi.

The Rust workspace comprises 15 published crates available on crates.io. The signal processing layer implements six state-of-the-art algorithms including SpotFi, Widar 3.0, and FarSense. The neural inference backend supports ONNX, PyTorch, and Candle. Models are packaged as single-file .rvf containers with Ed25519 cryptographic signing, progressive three-layer loading, and SIMD-accelerated quantization.

The multistatic mesh security layer encrypts all node-to-node communication, includes replay attack detection, and self-heals when nodes drop offline or are physically moved. An independent capability audit verified 1,031 Rust tests passing against real algorithm implementations with zero mocks.

Getting started takes thirty seconds. The Docker image requires no toolchain and no hardware beyond a laptop with a WiFi adapter. Pull the image, run the container, and open localhost:3000 for a live sensing dashboard. For those ready to go further, three to six ESP32-S3 boards connected to an existing WiFi router unlock the full capability stack for roughly $50 total.

The Broader Implications of Ambient Sensing Without Cameras

We are in the middle of a quiet infrastructure transition. The visions of the smart home, the smart hospital, and the smart office have stalled partly because camera-based sensing carries enormous social and regulatory friction. People do not want cameras in their bedrooms, their hospital rooms, or their changing areas. Regulators increasingly agree.

WiFi-based sensing sidesteps this friction at the physics level. The signal that senses you cannot, even in principle, capture your face. It cannot distinguish skin tone or read the text on your shirt. What it can do, with remarkable precision, is tell you that someone is in a room, that they are breathing normally, that they fell three minutes ago, that the chair near the window has been occupied for six hours straight.

RuView makes this technology available to any developer with a GitHub account and a spare weekend. The fact that it runs on a $9 microcontroller, requires no cloud infrastructure, and achieves 810x the throughput of its Python predecessor suggests that we are not far from a world where every WiFi access point is also a passive health and safety sensor, by default and by design.

The most important sensors of the next decade will not look like sensors at all. They will look like the WiFi routers we already have everywhere.

The project is MIT licensed, actively maintained, and has attracted more than 48,000 stars on GitHub. Whether you are building fall detection for an aging population, energy management for a commercial building, or disaster response tools for first responders, RuView offers a foundation that is genuinely production-ready.

How to Explore RuView

The fastest path to a working demo is the Docker image, which pulls in under two minutes and requires no hardware beyond a laptop with a WiFi adapter. The live Observatory visualization at ruvnet.github.io/RuView demonstrates the full sensing pipeline with synthetic data in any browser. The GitHub repository at github.com/ruvnet/RuView includes 62 Architecture Decision Records, 7 domain-driven design models, and a complete user guide covering installation through custom model training.

For those ready to move beyond simulation, three to six ESP32-S3 boards connected to an existing WiFi router unlock the full capability stack for roughly $50 total. That is about the cost of a single smart home camera with a monthly subscription, but with none of the footage and dramatically more useful information.